If you like Tom White's book Hadoop: The Definitive Guide, you will get even more out of it by trying the code yourself. You could use Ant or Maven to copy the source code into your own project and configure it by hand. The low-hanging fruit, though, is to use git to clone his source code onto your local machine, where it works almost out of the box. Here I took a few screenshots of loading his code into Eclipse, and I hope they are helpful.

1. Get Source Code.

Tom's book source code is hosted on GitHub, in the tomwhite/hadoop-book repository. You can submit issues there or ask the author himself if you have further questions. I cloned the project with git into my Eclipse workspace, a brand new workspace called EclipseTest.

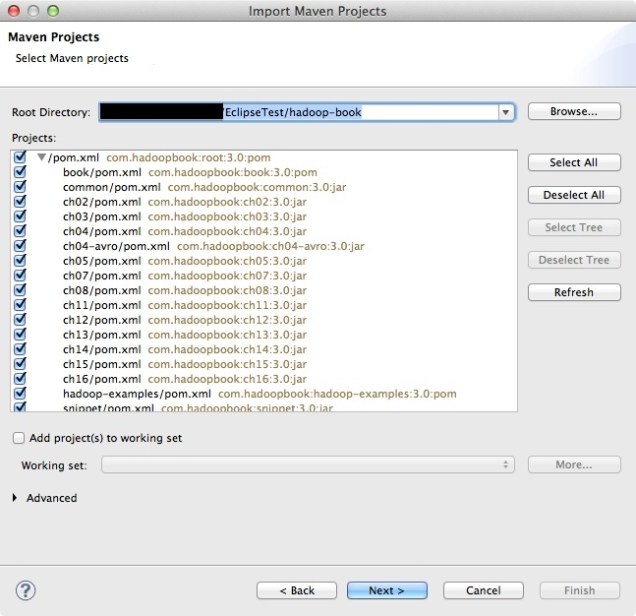

2. Load Existing Maven Project into Eclipse.

Next, open Eclipse and click File -> Import -> Maven -> Existing Maven Projects. Every chapter can be a separate Maven project, so to save time I imported the whole book at once: every chapter plus the test and example code.

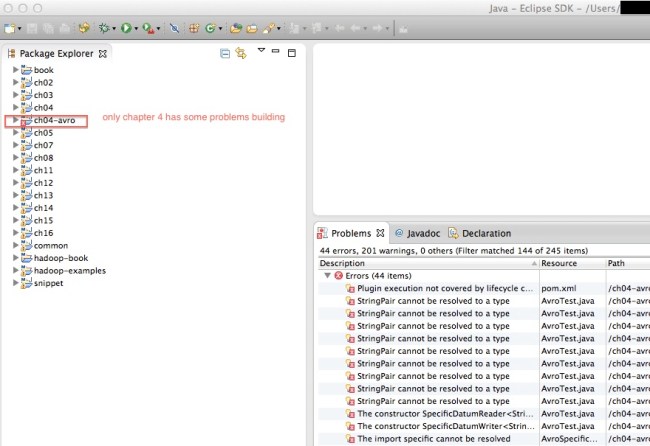

When you load the Maven projects, Eclipse might report errors about missing plugins and the like. Check quickly whether the Eclipse Marketplace offers a fix that makes the problem go away. If it does not, just keep importing with the errors. In my case I was missing Maven plugin 1.5, among others, which left me unable to build chapter 4 only. That is good enough for me, since I can at least get started with the other chapters and examples.

I also took a screenshot of the output file so you can get a brief idea of what the output should look like.

3. Run Code.

Now you can run any example that built successfully from within Eclipse, without worrying about the environment. For example, I am reading Chapter 7, MapReduce Types and Formats, in which he explains how to subclass RecordReader and treat every single file as a record. He also provides a piece of code that concatenates a list of small files into a sequence file: SmallFilesToSequenceFileConverter.java. I had already run start-all.sh from the Hadoop binary bin folder, and I could see that the Hadoop services (DataNode, ResourceManager, SecondaryNameNode, etc.) were running. You need to set up a Java Run Configuration so the code knows where to find the input files and where to write the output files. After that, just click Run, and bang! The code finishes successfully.
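To make the "every file is one record" idea a bit more concrete, here is a minimal sketch in plain Java. It is not Tom's SmallFilesToSequenceFileConverter, and it deliberately does not use Hadoop's SequenceFile API. The class name `SmallFilesPacker`, the method names, and the length-prefixed container layout are all my own assumptions for illustration. It only shows the shape of the technique: the file name becomes the key and the whole file content becomes the value. Like the Run Configuration above, `main` expects two program arguments, an input directory and an output file.

```java
import java.io.DataInputStream;
import java.io.DataOutputStream;
import java.io.EOFException;
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.stream.Collectors;
import java.util.stream.Stream;

// Hypothetical illustration only: a plain-Java stand-in for the
// "small files -> one container of (filename, bytes) records" idea.
// It does NOT produce a real Hadoop SequenceFile.
public class SmallFilesPacker {

    // Writes every regular file in inputDir as one record:
    // key = file name (UTF), value = length-prefixed file bytes.
    public static int pack(Path inputDir, Path container) throws IOException {
        List<Path> files;
        try (Stream<Path> listing = Files.list(inputDir)) {
            files = listing.filter(Files::isRegularFile)
                           .sorted()
                           .collect(Collectors.toList());
        }
        try (DataOutputStream out =
                 new DataOutputStream(Files.newOutputStream(container))) {
            for (Path file : files) {
                byte[] contents = Files.readAllBytes(file);
                out.writeUTF(file.getFileName().toString()); // record key
                out.writeInt(contents.length);               // value length
                out.write(contents);                         // record value
            }
        }
        return files.size();
    }

    // Reads the container back into an ordered map of file name -> bytes.
    public static Map<String, byte[]> unpack(Path container) throws IOException {
        Map<String, byte[]> records = new LinkedHashMap<>();
        try (DataInputStream in =
                 new DataInputStream(Files.newInputStream(container))) {
            while (true) {
                String name;
                try {
                    name = in.readUTF();
                } catch (EOFException endOfContainer) {
                    break; // no more records
                }
                byte[] contents = new byte[in.readInt()];
                in.readFully(contents);
                records.put(name, contents);
            }
        }
        return records;
    }

    // Program arguments mirror a Run Configuration: <input dir> <output file>.
    public static void main(String[] args) throws IOException {
        if (args.length != 2) {
            System.err.println("Usage: SmallFilesPacker <input dir> <output file>");
            System.exit(1);
        }
        int count = pack(Paths.get(args[0]), Paths.get(args[1]));
        System.out.println("Packed " + count + " files");
    }
}
```

The real converter in the book runs as a MapReduce job and writes through Hadoop's own output formats, so treat this only as a way to picture what "one file, one record" means before you step through his code in the debugger.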