This is a wonderful GitHub project from Lewis Zhang that creates a Docker image with Selenium server properly set up. However, over time the bundled Selenium server jar file has become outdated, so I want to update it to the latest version and contribute the change back to the project. This post documents how to do that as a newly registered GitHub user.

Monthly Archives: October 2014

AWS – S3 The home of data storage.

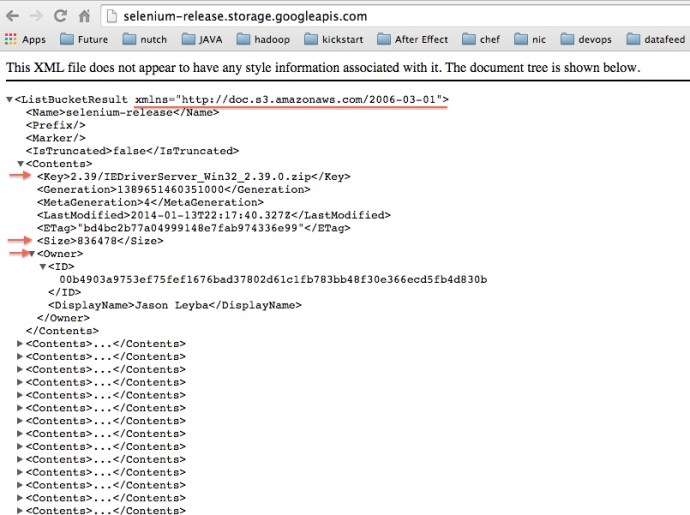

When I tried to download the Selenium standalone jar file, I realized that it is not served from a simple Apache directory listing. For example, the URL looks like:

http://selenium-release.storage.googleapis.com/2.44/selenium-server-standalone-2.44.0.jar

If you go to xxx.com/2.44, there is no content, but if you go one level up, it returns an interesting XML file. The part of the URL after the com/ is the key of an entry in that XML file, which is the same bucket-and-key layout that Amazon S3 uses. Note that the storage.googleapis.com host suggests the files are actually served by Google Cloud Storage, which exposes an S3-style XML API.

Google probably uses this kind of object store instead of an old-school Apache directory because it offers more options around security and permissions. Down the road, I need to learn how to use S3 to share files with the public with customized permissions.

Here is more information about the S3 REST API.
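To make the key idea concrete, here is a minimal Python sketch that pulls the keys out of a bucket listing and turns one back into a download URL. The sample XML is a made-up, trimmed-down listing in the S3-style `ListBucketResult` layout (its namespace and second entry are assumptions, not copied from the real bucket), and `list_keys` and `download_url` are hypothetical helper names.

```python
import xml.etree.ElementTree as ET

# Hypothetical, trimmed-down listing in the S3-style ListBucketResult layout.
SAMPLE_LISTING = """
<ListBucketResult xmlns="http://doc.s3.amazonaws.com/2006-03-01">
  <Name>selenium-release</Name>
  <Contents>
    <Key>2.43/selenium-server-standalone-2.43.1.jar</Key>
  </Contents>
  <Contents>
    <Key>2.44/selenium-server-standalone-2.44.0.jar</Key>
  </Contents>
</ListBucketResult>
""".strip()


def list_keys(listing_xml):
    """Return the text of every <Key> element, ignoring the XML namespace."""
    root = ET.fromstring(listing_xml)
    keys = []
    for element in root.iter():
        # Tags look like "{namespace}Key"; compare only the local name.
        if element.tag.rsplit("}", 1)[-1] == "Key":
            keys.append(element.text)
    return keys


def download_url(key, base="http://selenium-release.storage.googleapis.com"):
    """A key is simply the part of the URL after the host name."""
    return f"{base}/{key}"


keys = list_keys(SAMPLE_LISTING)
print(download_url(keys[-1]))
```

There are no real folders here: `2.44/` is just a prefix shared by some keys, which is why asking for the prefix alone returns nothing.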

Selenium – Server vs Web Driver

I have been using Selenium WebDriver for a while, and in the past I always happened to run the code on the same machine as the browser. For example, I tested the code on my Mac, where I could see the browser, then copied the code to a server, set up Firefox there and ran the code on the server. Today I dove into the nuts and bolts of Selenium Grid and came away with a much better understanding of Selenium Server. Selenium Server is basically a Selenium instance running on a box that listens on a certain port for requests, which can be local or external.

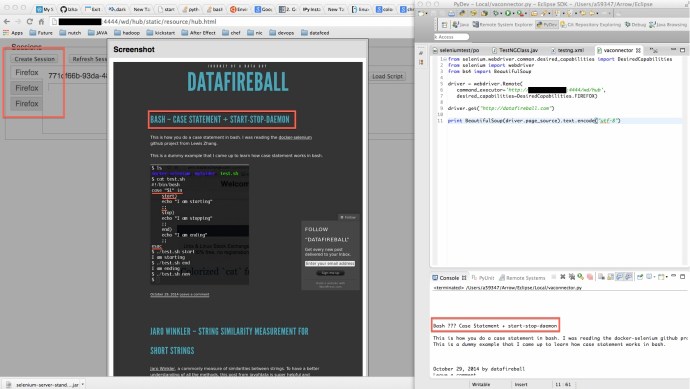

Here is a screenshot of a Selenium server running on an AWS machine, along with a Python script running on my local machine that makes requests to that server.
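A script like the one in the screenshot could look roughly like the sketch below. It is not the exact script from the screenshot. It assumes the `selenium` Python package is installed, that the server listens on port 4444 under the `/wd/hub` path (the usual defaults for the standalone server), and that Firefox is available on the remote box. `hub_url` and `fetch_title` are hypothetical helper names, and the host name is a placeholder.

```python
def hub_url(host, port=4444):
    """Build the endpoint of a remote Selenium server (assumed default port and path)."""
    return f"http://{host}:{port}/wd/hub"


def fetch_title(host, page_url, port=4444):
    """Open page_url in a browser on the remote box and return the page title."""
    # Imported here so the URL helper above works without selenium installed.
    from selenium import webdriver

    driver = webdriver.Remote(
        command_executor=hub_url(host, port),
        options=webdriver.FirefoxOptions(),
    )
    try:
        driver.get(page_url)
        return driver.title
    finally:
        # Always release the remote browser session.
        driver.quit()


# Placeholder host name; replace it with the address of your own server.
print(hub_url("my-selenium-box.example.com"))
```

The local script only sends commands over HTTP. The browser itself starts on the machine where Selenium Server is running.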

Bash – Case Statement + start-stop-daemon

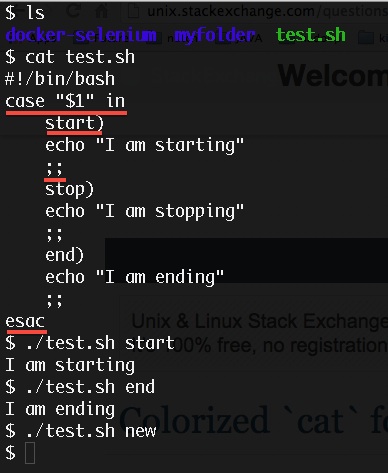

This is how you write a case statement in bash. I was reading the docker-selenium GitHub project from Lewis Zhang.

This is a dummy example that I came up with to learn how the case statement works in bash.

Jaro Winkler – String Similarity Measurement for short strings

Jaro Winkler is a commonly used measure of similarity between strings. For a better understanding of all the methods, this post from joyofdata is super helpful and informative, as is cirrius.

To start with any string similarity measurement, we need to talk about the underlying metric used to quantify the similarity. The most commonly used one is Levenshtein distance (1965), while Jaro Winkler builds on a different one called Jaro distance.

In a nutshell, the Levenshtein distance is the minimum number of single-character operations (substitute, insert, delete) needed to transform one string into another. The Jaro distance cannot be easily communicated without looking at the definition below.

d_jaro = 1/3 * ( m/|s1| + m/|s2| + (m-t)/m )

Two characters, one from each string, match when they are the same letter and their positions differ by no more than floor(max(|s1|, |s2|)/2) - 1. Then m is the number of matching characters and t is half the number of matching characters that appear in a different order in the two strings (like TE vs ET). When m is 0, d_jaro is defined to be 0.

The Jaro Winkler distance then builds on top of the Jaro distance by adding some weight when the two strings share the same prefix.

d_jaro_winkler = d_jaro + L * p * (1-d_jaro)

where L is the length of the common prefix at the beginning of the strings, up to a maximum of 4, and p is a scaling factor that should not exceed 1/4; the standard value is 0.1. I basically read through the explanation from Wikipedia here and tried to repeat it in my own words.

Jaro Winkler is claimed to be designed for, and best suited to, short strings such as person names. It has a fixed scale from 0 to 1 (perfect match), so despite the name it behaves like a similarity score.
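The two formulas above can be sketched in plain Python as follows. This is a minimal illustration written from the definitions in this post, not the code of any particular library. The function names are my own, and the greedy left-to-right matching is one common way to count m.

```python
def jaro(s1, s2):
    """Jaro score: 1/3 * (m/|s1| + m/|s2| + (m - t)/m), or 0 when m is 0."""
    if s1 == s2:
        return 1.0
    len1, len2 = len(s1), len(s2)
    if len1 == 0 or len2 == 0:
        return 0.0
    # Two equal characters match only if their positions are this close.
    window = max(max(len1, len2) // 2 - 1, 0)
    matched1 = [False] * len1
    matched2 = [False] * len2
    m = 0
    for i, ch in enumerate(s1):
        for j in range(max(0, i - window), min(len2, i + window + 1)):
            if not matched2[j] and s2[j] == ch:
                matched1[i] = matched2[j] = True
                m += 1
                break
    if m == 0:
        return 0.0
    # t is half the number of matched characters that are out of order.
    seq1 = [c for c, flag in zip(s1, matched1) if flag]
    seq2 = [c for c, flag in zip(s2, matched2) if flag]
    t = sum(a != b for a, b in zip(seq1, seq2)) / 2
    return (m / len1 + m / len2 + (m - t) / m) / 3


def jaro_winkler(s1, s2, p=0.1):
    """Boost the Jaro score by the length L of the common prefix (at most 4)."""
    d_jaro = jaro(s1, s2)
    prefix = 0
    for a, b in zip(s1[:4], s2[:4]):
        if a != b:
            break
        prefix += 1
    return d_jaro + prefix * p * (1 - d_jaro)


print(round(jaro("MARTHA", "MARHTA"), 4))          # 0.9444
print(round(jaro_winkler("MARTHA", "MARHTA"), 4))  # 0.9611
```

For MARTHA vs MARHTA, all six characters match (m = 6), T and H are swapped (t = 1), and the common prefix MAR gives L = 3.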

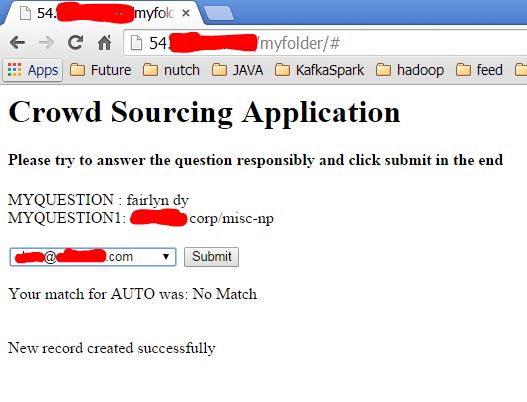

Crowd Sourcing (Crowd Voting) – a PHP template

I ran into a problem: given a first name, I need to pick the most likely email address from a list of possible emails.

For example, given a first name like Mike and a list of emails like binwang@datafireball.com, glenna@datafireball.com, mmarshall@datafireball.com, mikemarshall@datafireball.com, etc., I think mikemarshall@datafireball.com is the most likely to be the correct match.

You can apply an algorithm to calculate the similarity between the first name and each email address. However, there are situations where mmarshall@datafireball.com is a better match than binwang@datafireball.com and glenna@datafireball.com, simply because of the convention of combining the first letter of the first name with the last name. Because of cases like this, a single algorithm might not work that well.
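Here is a small Python sketch of that point. It scores each email with `difflib.SequenceMatcher` from the standard library, then optionally adds a bonus when the local part starts with the first initial. The bonus value of 0.1 and the function names are assumptions made up for this illustration, not what my PHP tool does.

```python
from difflib import SequenceMatcher

EMAILS = [
    "binwang@datafireball.com",
    "glenna@datafireball.com",
    "mmarshall@datafireball.com",
    "mikemarshall@datafireball.com",
]


def score(first_name, email, initial_bonus=0.0):
    """Similarity between a first name and the part of the email before the @."""
    name = first_name.lower()
    local_part = email.split("@", 1)[0].lower()
    similarity = SequenceMatcher(None, name, local_part).ratio()
    # Hypothetical heuristic: reward the "first initial + last name" convention.
    if local_part.startswith(name[:1]):
        similarity += initial_bonus
    return similarity


def rank(first_name, emails, initial_bonus=0.0):
    """Return the emails sorted from most to least likely match."""
    return sorted(emails, key=lambda e: score(first_name, e, initial_bonus), reverse=True)


print(rank("Mike", EMAILS))                     # plain similarity only
print(rank("Mike", EMAILS, initial_bonus=0.1))  # with the first-initial bonus
```

With plain similarity, mikemarshall comes first but mmarshall lands last, behind glenna and binwang. With the bonus, mmarshall moves up to second place. That is exactly the kind of case where one algorithm alone is not enough.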

The amount of work is not too big to do manually, but it is too big for ME to do manually, so I decided to divide and conquer. I turned each name mapping into a question and made it accessible through the browser using some basic PHP.

You can access the PHP source code from my GitHub repo; you might need to reverse engineer it and guess how the MySQL table should look based on your use case.

Here is a screenshot of what it looks like:

When a user submits a response, I record not only their choice but also the user agent, the IP address and a cookie that I assigned to that user for tracking. Later down the road, some machine learning could be done to distinguish the irresponsible users from the informed and careful ones.

Bloom Filter

Implementation: python-bloomfilter, demo

This JavaScript library, developed by Jason Davies, visualizes how a Bloom filter works, and it is pure art.
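For readers who prefer code to pictures, below is a minimal Bloom filter sketch in Python. It is not the python-bloomfilter implementation linked above. The bit-array size, the number of hashes and the trick of salting SHA-256 with a seed are all assumptions chosen to keep the example short.

```python
import hashlib


class BloomFilter:
    """A set-like structure that may report false positives but never false negatives."""

    def __init__(self, size=1024, num_hashes=3):
        self.size = size
        self.num_hashes = num_hashes
        self.bits = [False] * size

    def _positions(self, item):
        # Derive num_hashes bit positions by salting one hash function with a seed.
        for seed in range(self.num_hashes):
            digest = hashlib.sha256(f"{seed}:{item}".encode("utf-8")).hexdigest()
            yield int(digest, 16) % self.size

    def add(self, item):
        for position in self._positions(item):
            self.bits[position] = True

    def __contains__(self, item):
        # "Maybe present" only if every position for this item is set.
        return all(self.bits[position] for position in self._positions(item))


bloom = BloomFilter()
bloom.add("selenium")
print("selenium" in bloom)  # True
```

An item that was added is always found. An item that was never added is usually rejected, but it can collide with bits set by other items, which is where false positives come from.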

Cloudera – Cloudera Search Flume + HBase + SolrCloud

I need to look at Cloudera Search. Solr and Elasticsearch are both awesome tools, and they can really scale to fit the big data world in the form of SolrCloud and Elasticsearch clusters. I set up an Elasticsearch cluster on top of Hadoop and it was really easy to use. However, there are downsides:

(1) It is a bit hard to administer. Since we are using CDH, it would be easier to monitor all the animals in one place: Cloudera Manager.

(2) When you need to index the data, you have to turn it into JSON, move it from HDFS to local disk and write some Python code to index it. At least that was how I did it.

I have known for a long time that Cloudera has Solr built in, but I never really looked into it. Today I did some research, and it seems they have a decent architecture that works right out of the box. You can have the data flow through Flume into your HDFS environment, store it in HBase, and index it there with Solr from the Hue environment. If everything works as claimed, the whole structure might be really stable and awesome! Your team might not need another 200K to hire an expensive big data programmer, because those few tools will just do what you want out of the box. 🙂
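To give a feel for step (2), here is a rough Python sketch of the "turn it into JSON" part. It builds the newline-delimited body that the Elasticsearch bulk API expects, with one action line followed by one document line per record. The records and the index name `events` are made up for illustration, and actually sending the payload to a cluster is left out. Older Elasticsearch versions also expected a `_type` in the action line.

```python
import json


def build_bulk_payload(records, index_name):
    """Return a newline-delimited JSON body: an action line, then the document."""
    lines = []
    for record in records:
        lines.append(json.dumps({"index": {"_index": index_name}}))
        lines.append(json.dumps(record))
    # The bulk format requires the body to end with a newline.
    return "\n".join(lines) + "\n"


# Hypothetical records, standing in for rows copied out of HDFS.
records = [
    {"user": "binwang", "action": "login"},
    {"user": "glenna", "action": "search"},
]
payload = build_bulk_payload(records, "events")
print(payload)
```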

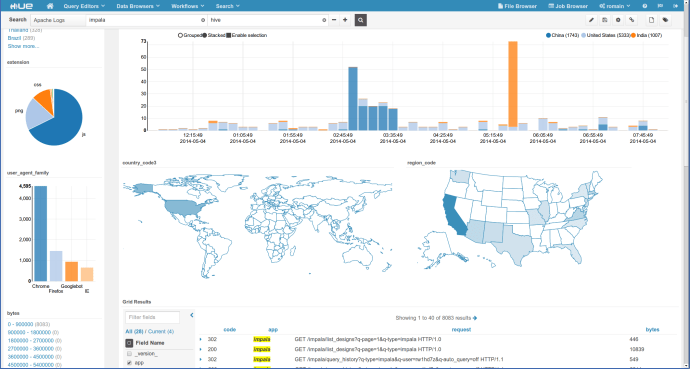

For the front end, Kibana is another reason why I like Elasticsearch. There is also Silk, which is an equivalent of Kibana for Solr, and the newer versions of Hue have some pretty kickass dashboards where you get your bar chart, pie chart and favorite map.

Sublime – Difference from a plain text editor

I started using Sublime Text a long time ago, and the only reason I loved that text editor was its syntax highlighting. Now I have found a few more features in Sublime Text that will save me tons of time.

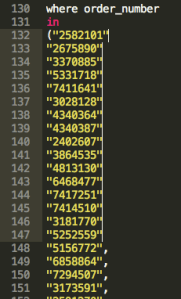

(1) Edit Multiple Lines.

Select a block of text, press “Shift + Command + L”, and you can now edit multiple lines at the same time.

Python – Find Combinations – Itertools.combinations

The question starts with how to find all the possible combinations of the elements in a given list. I found the Python solution here. Of course, Python already has a built-in function to take care of it: the combinations function from the itertools library.

However, the combinations function corresponds to C(n, r): it only returns an iterator over the combinations of one given length r. To find all the combinations of a set, we need to loop through all the possible lengths and add the combinations for each length to the result.

There are also a few other interesting functions in itertools, like permutations and groupby, which are all super useful.
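The loop over lengths can be sketched like this. The helper name `all_combinations` is my own, and I start at length 1, so the empty combination is left out.

```python
from itertools import combinations


def all_combinations(items):
    """Collect the combinations of every length from 1 up to len(items)."""
    result = []
    for length in range(1, len(items) + 1):
        # combinations() yields tuples of exactly `length` elements.
        result.extend(combinations(items, length))
    return result


print(all_combinations([1, 2, 3]))
# [(1,), (2,), (3,), (1, 2), (1, 3), (2, 3), (1, 2, 3)]
```

For a list of n elements this produces 2^n - 1 tuples, so it grows quickly as the list gets longer.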