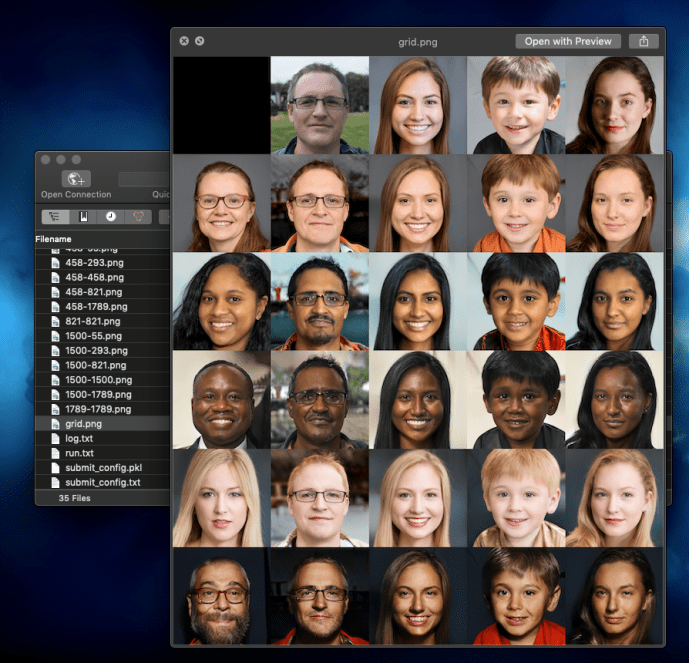

First, here is proof that I got StyleGAN2 (using a pre-trained model) working 🙂

An Nvidia GPU can accelerate computation dramatically, especially for training models. However, if you are not careful, all the time you save on training can easily be wasted struggling to set up the environment in the first place, if you can get it working at all.

The challenge here is that there are multiple Nvidia-related components: the basic GPU driver, CUDA, cuDNN and others. Each of them comes in different versions, and you need to make sure those versions are consistent with each other. That by itself is already a pain to go through. Last but certainly not least is TensorFlow: you have to get it not only working, but also aligned with the CUDA environment that you have, while at the same time using it with a project that you likely did not write yourself but forked from someone else's GitHub repository. The odds of all those steps working seamlessly are low, and the struggle adds no value for someone who just wanted to generate some images; it serves no purpose other than frustration.
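To see which versions are actually in play on a given machine, something like the following can help. It is only a rough sketch: the `check` helper is a made-up name, it assumes `nvidia-smi`, `nvcc` and `python3` are the relevant entry points on your system, and it does not cover cuDNN at all.

```shell
#!/usr/bin/env bash
# check LABEL CMD [ARGS...]  (hypothetical helper, not part of any toolkit)
# Prints the first version-looking line of CMD's output,
# or "not found" when CMD is not on the PATH.
check() {
    label="$1"; shift
    if command -v "$1" >/dev/null 2>&1; then
        # Keep the first line that contains something like "10.0" or "1.15.0".
        version="$("$@" 2>/dev/null | grep -m1 -E '[0-9]+\.[0-9]+' || true)"
        printf '%s: %s\n' "$label" "${version:-no version reported}"
    else
        printf '%s: not found\n' "$label"
    fi
}

check "GPU driver"   nvidia-smi --query-gpu=driver_version --format=csv,noheader
check "CUDA toolkit" nvcc --version
check "TensorFlow"   python3 -c 'import tensorflow as tf; print(tf.__version__)'
```

A line that says "not found" or "no version reported" points at the layer that is missing or broken, which is usually where the mismatch hunt starts.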

I know that lots of Python users out there use Anaconda to manage their Python development environments: switching between different versions of Python at will, maintaining multiple environments with different versions of TensorFlow, and sometimes even creating a completely new conda environment for each project just to keep things clean. When the goal is to get a GitHub project up and running fast, I guess that workflow is not enough. There is much more to the environment than just Python, so in the end a tool like Docker is actually the panacea.

If you have not used Docker much in the past, it is as easy as memorizing a few command-line instructions to start and stop a Docker container. The "TensorFlow with Docker" tutorial from Google is a fantastic way to get started.

For stylegan2, here are some commands that might help you.

    # build the Docker image using the Dockerfile from stylegan2
    sudo docker build - < Dockerfile
    sudo docker image ls
    # tag the image ID reported by "image ls"
    sudo docker tag 90bbdeb87871 datafireball/stylegan2:v0.1
    # create a disposable working environment that gets deleted after logout
    # and maps the current host working directory to /tmp inside the container
    sudo docker run --gpus all -it --rm -v `pwd`:/tmp -w /tmp datafireball/stylegan2:v0.1 bash
    # run it as a long-running daemon, like a development environment
    sudo docker run --gpus all -it -d -v `pwd`:/tmp -w /tmp datafireball/stylegan2:v0.1 bash
    sudo docker container ls
    # connect to the container and run bash, "like ssh"
    sudo docker exec -it youthful_sammet /bin/bash
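If you would rather keep one container around than create a new one each time, giving it a name makes stopping and restarting easier. The sketch below is only an illustration: the container name `stylegan2-dev` is made up, and the `DOCKER` variable is a dry-run convenience I am assuming here, so by default the commands are printed instead of executed.

```shell
#!/usr/bin/env bash
# Dry run by default: prints each command. Use DOCKER="sudo docker" to really run them.
DOCKER="${DOCKER:-echo docker}"
NAME="stylegan2-dev"                 # hypothetical container name
IMAGE="datafireball/stylegan2:v0.1"  # the tag created above

# Create the long-running container once, under a fixed name.
$DOCKER run --gpus all -it -d --name "$NAME" -v "$(pwd)":/tmp -w /tmp "$IMAGE" bash

# Stop it when you are done for the day.
$DOCKER stop "$NAME"

# Later (for example after a reboot) bring the same container back.
$DOCKER start "$NAME"

# Attach a shell to it, as before.
$DOCKER exec -it "$NAME" /bin/bash
```

Because `docker start` reuses the existing container rather than creating a fresh one, anything installed inside it is still there, as long as the container was not created with `--rm` or removed in the meantime.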

I assure you, the pleasure of getting all the examples to run AS-IS is unprecedented. I suddenly changed my view from “nothing F* works, what is wrong with these developers” to “the world is beautiful, I love the open source community”.

You can use Docker not only to get StyleGAN running but also to get tensorflow-gpu-py3 working. I do not mean to jump the gun for the rest of the development world, but I bet plenty of other people who struggle with environment setup can benefit from using Docker. There are just as many people out there who can make the world a better place by starting their projects with a Docker image, knowing that no one in the world, including the developer, knows how the environment is configured.
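As a rough idea of how little it takes to ship a project with its environment, here is a sketch that writes a minimal Dockerfile into a scratch directory. The base image and the pinned package versions are assumptions picked for a TensorFlow 1.15 GPU setup, and the image name in the final comment is made up; adjust all of them to what your project actually needs.

```shell
#!/usr/bin/env bash
# Write a minimal Dockerfile into a temporary directory (hypothetical layout).
workdir="$(mktemp -d)"

cat > "$workdir/Dockerfile" <<'EOF'
# Assumed base image: TensorFlow 1.15 with GPU support and Python 3
FROM tensorflow/tensorflow:1.15.0-gpu-py3
# Assumed pins; replace with the packages your project requires
RUN pip install scipy==1.3.3 requests==2.22.0 Pillow==6.2.1
EOF

echo "Dockerfile written to $workdir"

# To build and tag it in one step (image name is hypothetical):
#   sudo docker build -t myproject:dev "$workdir"
```

Containers started from the tag you built already contain the pinned packages. Containers started directly from the base image do not, so make sure `docker run` points at your own tag.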

Life is short, use [Python inside a docker] 🙂

Thanks! I am new to Linux but still managed to run StyleGAN2 in Docker with your help. I have some questions:

I built a Docker image using the StyleGAN2 Dockerfile. Every time I restart my computer, I do the following:

docker run --gpus all -it -d -v `pwd`:/tmp -w /tmp tensorflow/tensorflow:1.15.0-gpu-py3 bash

docker exec -it (container id) /bin/bash

pip install scipy==1.3.3

pip install requests==2.22.0

pip install Pillow==6.2.1

1. Is there a way to not lose my Docker environment after a restart?

2. The Dockerfile I built the image from already contains scipy, requests and Pillow, but when I exec into my environment I need to install them again every time.

Thanks for your help.

What exactly is your Dockerfile to create a working StyleGAN2 Docker image? I can build one and copy over the code, but it claims not to have CUDA installed, even when passing through the GPUs using `--gpus all`.