concurrent.futures is a module that has shipped with Python since version 3.2. In any recent Python 3 release, such as 3.5, 3.6 or newer, it is part of the standard library, just like os and sys, and is readily available.

It has the benefit of “democratizing” parallel processing: developers no longer have to understand, or write, the tens of lines of boilerplate that a basic multithreading or multiprocessing setup usually requires.

Without further ado, let’s start with an example by simulating how much time could be saved.

Preparation

Here we have a very basic function: it takes some input, does something and returns a result.

In this case, to simulate the time savings, we intentionally let the function sleep for i seconds to mimic delays due to computing, network, IO, etc. For analysis purposes, we also capture the thread_id, the times the function started and ended, the input and the output (a simple square).
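A minimal sketch of such a function is shown here. The name `task` and the dictionary keys are assumptions made for illustration; the original code may look different.

```python
import threading
import time


def task(i):
    """Sleep for i seconds, then return bookkeeping details and i squared."""
    start = time.time()
    time.sleep(i)  # stand-in for a compute, network, or IO delay
    end = time.time()
    return {
        "thread_id": threading.get_ident(),  # id of the thread that ran the task
        "start": start,
        "end": end,
        "input": i,
        "output": i ** 2,
    }


# A short delay keeps the demo quick.
result = task(0.1)
print(result["thread_id"], result["input"], result["end"] - result["start"])
```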

Baseline – Synchronous

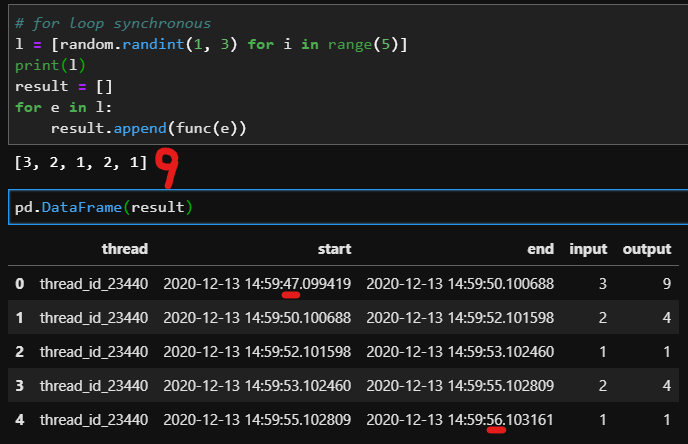

Let’s start with a baseline where we randomly generate a list of tasks and loop through them one by one.

In this test, we generated 5 tasks with different delays, which should take 9 seconds in total to complete. We executed them sequentially, captured the output and displayed it as a pandas dataframe. As expected, we have 5 rows that correspond to the 5 tasks. All 5 tasks were executed by the same thread (23440), and the total time, the delta between when the first task started and when the last one finished, is 9 seconds.
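The baseline can be sketched roughly as follows. The delay list is hypothetical (the article only says that 5 tasks add up to 9 seconds) and is scaled down tenfold so the sketch runs in about a second; `task` is the same assumed helper as before, repeated so the block is self-contained.

```python
import threading
import time


def task(i):
    """Sleep for i seconds and record which thread did the work."""
    start = time.time()
    time.sleep(i)
    return {"thread_id": threading.get_ident(), "start": start,
            "end": time.time(), "input": i, "output": i ** 2}


# Hypothetical delays: 3, 1, 2, 1, 2 seconds scaled down by a factor of 10.
delays = [0.3, 0.1, 0.2, 0.1, 0.2]

# Plain loop: every task runs on the calling thread, one after another.
results = [task(d) for d in delays]

elapsed = results[-1]["end"] - results[0]["start"]
thread_ids = {r["thread_id"] for r in results}
print(f"{len(results)} tasks on {len(thread_ids)} thread in {elapsed:.2f}s")
```

The list of dictionaries can be passed to `pandas.DataFrame(results)` if you want the tabular view described above.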

This is very typical for most processing jobs: many tasks that are similar but fairly independent. Maybe each task reads in some files and processes them, or takes a URL, collects the HTML and transforms it.

In this visualization, we can see that all tasks are handled by one worker and the work is done sequentially, or synchronously.

Map

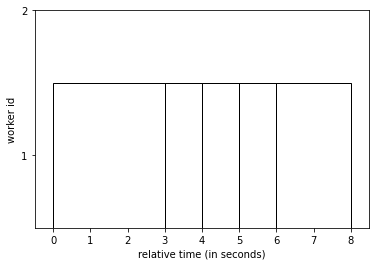

This is a very basic yet powerful script showing how to use concurrent.futures. First, we create an executor. Here we use ThreadPoolExecutor, but you can also use ProcessPoolExecutor if you want. The rule of thumb is to use multithreading when the work is IO-bound and multiprocessing when it is CPU-bound.

Here, we set the number of workers (threads) to 1 just to get the syntax working first. We then call the executor’s map method, which does all the heavy lifting: it takes the task list and IMMEDIATELY distributes the tasks to the executor.

As you can tell, the code works: it does exactly what we did above, and the execution plan looks virtually the same as the baseline.
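For readers who cannot see the screenshots, a single-worker version might look like the sketch below. The `task` helper and the scaled-down delay list are assumptions carried over for illustration, not the original code.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


def task(i):
    """Sleep for i seconds and record which thread did the work."""
    start = time.time()
    time.sleep(i)
    return {"thread_id": threading.get_ident(), "start": start,
            "end": time.time(), "input": i, "output": i ** 2}


delays = [0.3, 0.1, 0.2, 0.1, 0.2]  # hypothetical, scaled-down delays

# max_workers=1 means a single worker thread, so tasks still run one by one.
with ThreadPoolExecutor(max_workers=1) as executor:
    # map submits every task up front and yields results in input order.
    results = list(executor.map(task, delays))

worker_ids = {r["thread_id"] for r in results}
print(f"inputs in order: {[r['input'] for r in results]}")
print(f"distinct worker threads: {len(worker_ids)}")
```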

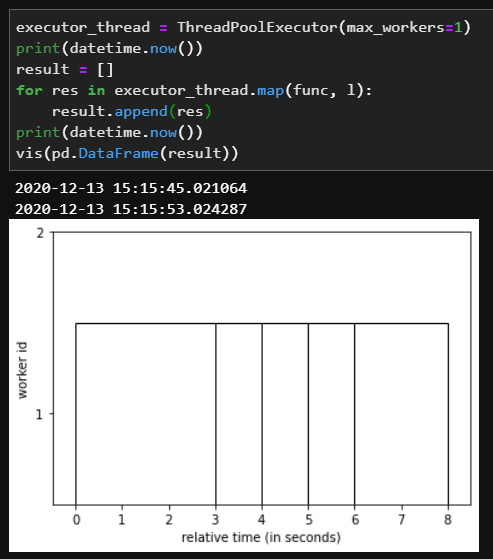

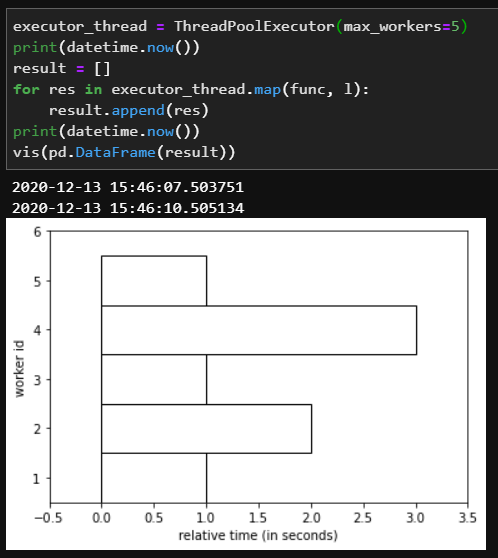

Now let’s change the number of workers to 2. This is where we start seeing parallel processing in action. The moment one worker takes on the 3-second task, a different worker takes on a 1-second task. Once that task finishes, the worker picks up another one, and so on and so forth.

The total execution time is now only 5 seconds, which cuts the 9-second baseline roughly in half. An interesting observation is that, in general, how the tasks are assigned to workers also affects the total time, for example whether the 3-second and 2-second tasks end up on separate workers, but scheduling is another problem.

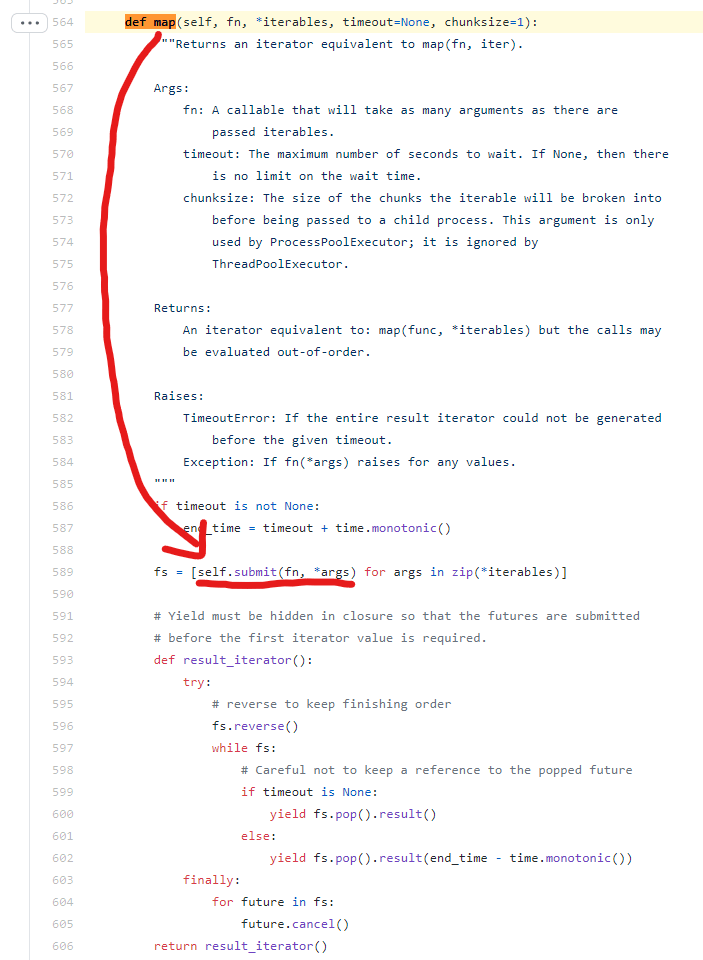

You can read more about the map function either from the documentation or the source code directly.

Of course, to see the full power of concurrent.futures, provide enough workers: the total execution time can then be minimized to 3 seconds.
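The effect of the worker count can be reproduced with a small timing sketch. The helper `timed_run` and the delay list are hypothetical, and the delays are scaled down tenfold, so read the timings as tenths of the seconds quoted in the text.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def task(i):
    """Sleep for i seconds and return the square of the input."""
    time.sleep(i)
    return i ** 2


delays = [0.3, 0.1, 0.2, 0.1, 0.2]  # hypothetical, scaled-down delays


def timed_run(max_workers):
    """Run all tasks with the given worker count and return elapsed seconds."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=max_workers) as executor:
        list(executor.map(task, delays))  # consume results so all tasks finish
    return time.perf_counter() - start


timings = {workers: timed_run(workers) for workers in (1, 2, 5)}
for workers, elapsed in timings.items():
    print(f"max_workers={workers}: {elapsed:.2f}s")
```

With one worker per task, the run cannot finish faster than its longest task, which is why adding workers beyond that point stops helping.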

Submit

So what is “submit”? IMHO, one will use submit more often than map in real life, so this is probably the function you should familiarize yourself with most. If you look into the source code of map, it actually calls submit behind the scenes.

It is very simple to use. In addition, you can pass *args and **kwargs through to your function, which gives you more flexibility.

The key difference between map and submit is that map returns results in the same order as the inputs, while submit + as_completed loops through the futures in the order they complete.
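The ordering difference can be illustrated with a short sketch. The `task` function, its `label` keyword argument and the delays are made up for this example.

```python
import time
from concurrent.futures import ThreadPoolExecutor, as_completed


def task(i, label="task"):
    """Sleep for i seconds and return the label together with the input."""
    time.sleep(i)
    return (label, i)


delays = [0.3, 0.1, 0.2]  # hypothetical delays; the slowest task is first

with ThreadPoolExecutor(max_workers=3) as executor:
    # map: results come back in the order of the inputs.
    map_order = list(executor.map(task, delays))

    # submit: extra positional and keyword arguments are forwarded to task.
    futures = [executor.submit(task, d, label="job") for d in delays]
    # as_completed: futures are yielded as they finish, fastest first here.
    completed_order = [future.result() for future in as_completed(futures)]

print("map:         ", map_order)
print("as_completed:", completed_order)
```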

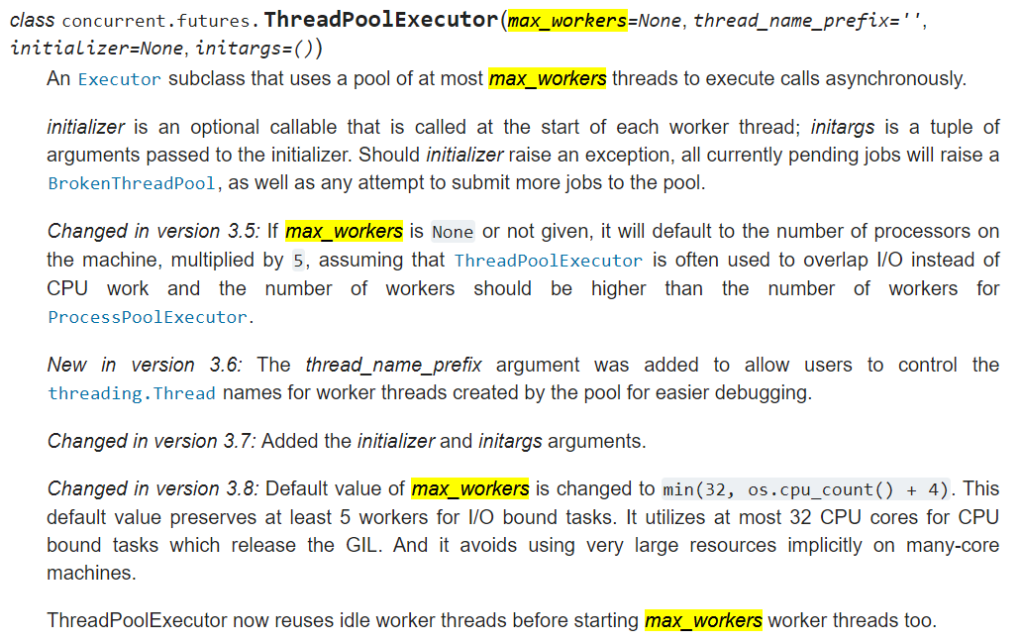

max_workers

Speaking of the number of workers, the obvious answer is the more, the merrier. However, the computing resources of any given machine are limited, namely the number of CPUs and the number of threads available.

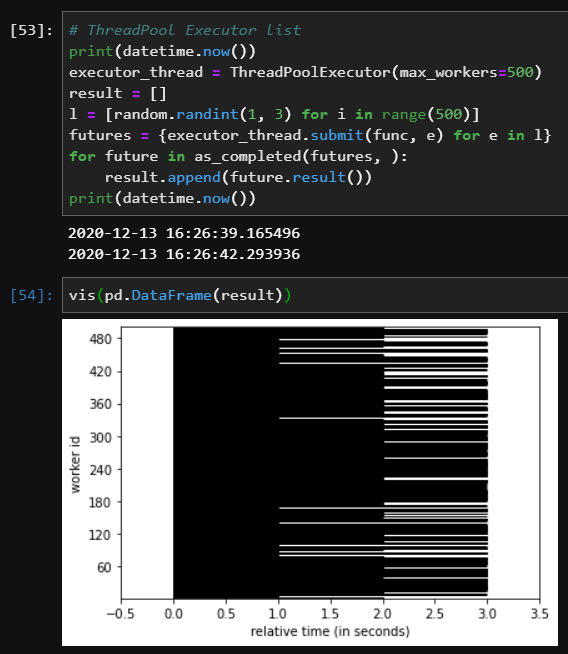

In this case, I have created 500 tasks and requested 500 workers and they seem to work fine.

In ThreadPoolExecutor, if you don’t specify max_workers, a default value is applied; you can find more information in the documentation.

For example, as indicated in the documentation, in Python 3.8 the default is min(32, os.cpu_count() + 4). os.cpu_count() returns 12 on my DELL XPS, so the default max_workers will be min(32, 12 + 4) = 16 workers. From what I have read, the number of hardware threads is limited, while the number of software threads can be made almost as large as you want. However, once the thread count approaches what the hardware can actually run, increasing the number of threads / max_workers further brings little marginal benefit.
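The arithmetic above can be checked with a few lines. `default_max_workers` is a hypothetical helper that simply re-implements the formula quoted from the Python 3.8 documentation; it is not part of the library, and newer Python versions may count CPUs differently.

```python
import os


def default_max_workers(cpu_count):
    """Formula quoted from the Python 3.8 docs for ThreadPoolExecutor."""
    return min(32, cpu_count + 4)


# os.cpu_count() can return None, so fall back to 1 in that case.
cpus = os.cpu_count() or 1

print(f"this machine: {cpus} CPUs -> {default_max_workers(cpus)} workers")
print(f"12 CPUs -> {default_max_workers(12)} workers")
print(f"64 CPUs -> {default_max_workers(64)} workers (capped at 32)")
```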

Conclusion

In this blog post, we have covered several basic usages of concurrent.futures.ThreadPoolExecutor, and you should be ready to save some execution time by leveraging all those beautiful threads. However, there is more to it than simply distributing lots of work.

Some key topics that are worth pursuing in the future:

- how to collect and persist all the results in a thread-safe way

- when to use multithreading and when to use multiprocessing, and how to tell which one applies

- how to configure timeouts and handle errors properly

- other aspects of the concurrent.futures library