When you consider deploying your model into production, a key step is to understand its resource requirements. This covers not only the machine learning prediction itself, but everything that runs inside the container. That requires us to do some profiling and understand how the container performs under different load scenarios.

Here we are going to profile a container that hosts a local deployment of the sample AzureML project: diabetes prediction, which uses a ridge regression model.

The concept is simple. We artificially generate some requests and measure, under different resource allocations, how long it takes to serve them. If the computing resources are insufficient, your container will be slow. On the other hand, if resources are over-allocated, performance won’t improve further and the extra resources are just wasted money.

The first question is how to create the load. Ideally, you want the payload to be as close to production request patterns as possible. For example, if you reuse the same payload and only change the number of requests, effects like caching could skew your test results (your code looks faster in testing but acts slower in production). At the same time, the true performance of a web server can hardly be measured with synchronous, one-at-a-time calls, so you will also want to make concurrent (asynchronous) calls to maximize the throughput.

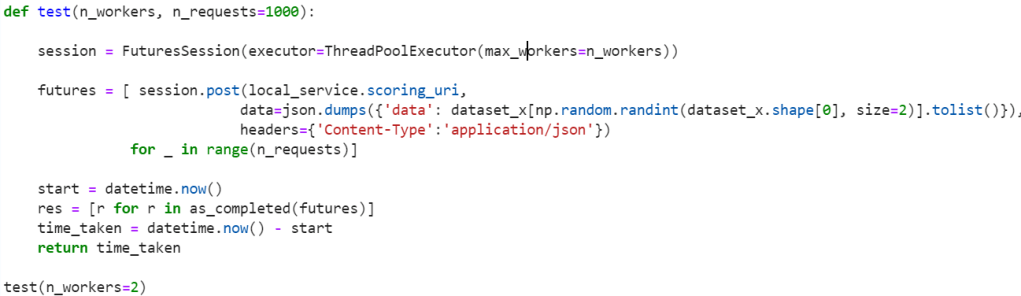

Here is a small test script to demonstrate the idea. You pass in the number of workers and the total number of requests for a test, and it returns how long it took to finish all of the requests. The shorter, the better.

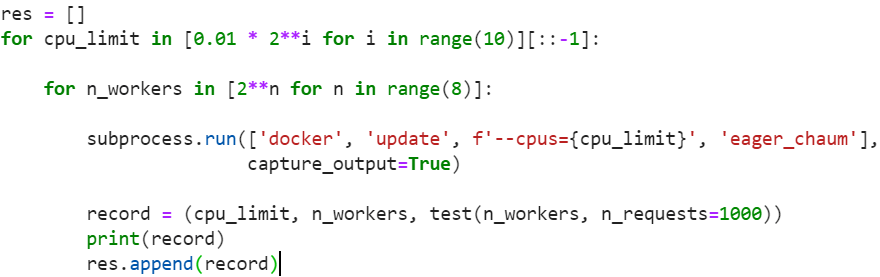
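For readers who cannot see the screenshot, here is a minimal sketch of the same idea in plain Python. It is not the script from the image. `run_load_test` and `fake_request` are hypothetical names, and `fake_request` is only a stand-in that sleeps instead of posting to the real scoring endpoint, so swap in your own HTTP call.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def run_load_test(send_request, workers, total_requests):
    """Issue total_requests calls from `workers` threads; return elapsed seconds."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # list() forces us to wait until every request has completed.
        list(pool.map(send_request, range(total_requests)))
    return time.perf_counter() - start


def fake_request(i):
    """Stand-in for one call to the scoring endpoint (assumption: ~10 ms each)."""
    time.sleep(0.01)
    return i


# Try a few worker counts against the stand-in request.
for workers in (1, 2, 4):
    elapsed = run_load_test(fake_request, workers, 20)
    print(f"workers={workers} elapsed={elapsed:.2f}s")
```

Passing the request function in as an argument keeps the timing logic separate from the transport, so the same harness works with any client library and with varied payloads.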

After you have a test script, the next step is to try out different configurations and take some measurements.

In this test, I only vary the cpu_limit assigned to the container.

cpu_limit is the upper bound on the CPU your container can consume. Many other factors can limit the CPU actually available to your container. For example, setting cpu_limit to 24 doesn’t guarantee that any particular amount of CPU is available. A typical laptop might have only 4 cores in the first place, in which case setting cpu_limit to 24 makes no sense. Another factor is competing activity: if other processes are running during the test, the CPU available to your container takes another hit.

During the test, if the load is low and your application is perfectly capable of handling it, you might reach neither the physical limit nor the cpu_limit assigned to it. During the stress test, you probably want to avoid other unrelated activity, so that the available CPU is sufficient and the cpu_limit you apply is the constraint that actually dominates.

In my testing script, I generated a list of cpu_limit values starting as small as 0.01 CPU (10 millicpu). (I actually ran the large CPU assignments first because they finish faster.) For each cpu_limit, I tried different numbers of workers to simulate different levels of concurrency.

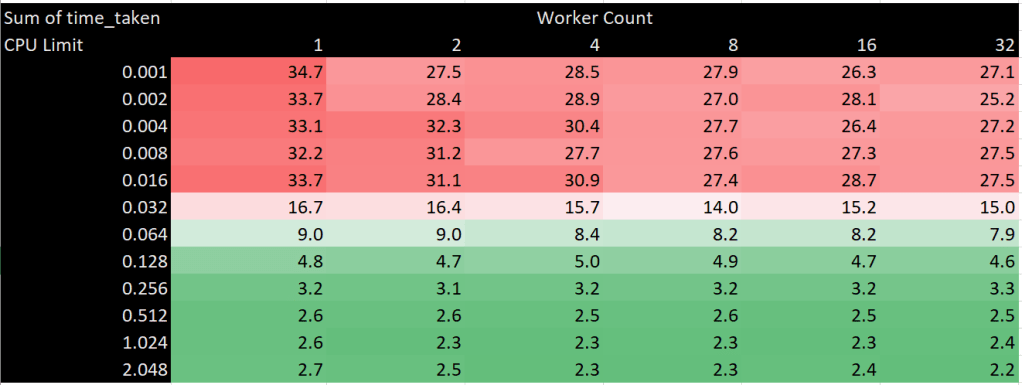
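The sweep itself can be sketched roughly as follows. This is an illustration, not the script in the screenshot. The doubling factor, the function names, and the `measure` callback are all assumptions. In a real run, `measure` would redeploy the container with the given cpu_limit and call the load test. Here it is replaced by a made-up formula so the sketch runs on its own.

```python
def cpu_limit_grid(start=0.01, factor=2.0, count=8):
    """Geometric sequence of cpu_limit values, largest first (they finish faster)."""
    limits = [round(start * factor ** i, 4) for i in range(count)]
    return sorted(limits, reverse=True)


def sweep(measure, cpu_limits, worker_counts):
    """Call measure(cpu_limit, workers) for every combination and collect rows."""
    results = []
    for cpu in cpu_limits:
        for workers in worker_counts:
            seconds = measure(cpu, workers)
            results.append({"cpu_limit": cpu, "workers": workers, "seconds": seconds})
    return results


def fake_measure(cpu_limit, workers):
    """Placeholder only: NOT real data, just something that shrinks with more CPU."""
    return 1.0 / cpu_limit / workers


rows = sweep(fake_measure, cpu_limit_grid(count=4), worker_counts=[1, 2])
for row in rows:
    print(row)
```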

Following is the result of the first test. Instead of 1000 calls per test, I used 100 calls just to be fast. Unsurprisingly, the more CPU you assign, the better the performance, up to a point. For example, with 0.512 cpu it took less than 3 seconds to make 100 calls, and giving it more CPU did not improve performance further. Meanwhile, for the worker count, the improvement is not as obvious as you might expect. One might imagine that if the task were I/O bound, having two workers would double the throughput, and so on.

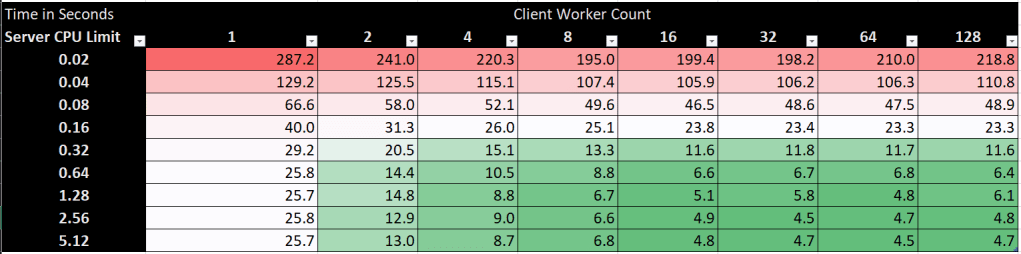

In the next test, I increased the number of calls from 100 to 1000, and you can immediately see the performance improvement from the client side. When you assign sufficient CPU, adding more workers keeps improving client-side performance until it levels off.

How surprising! The more resources we give it, the faster it gets? 🙂 This is a typical optimization problem: for a fixed amount of CPU resources, how should we size each container so that we can handle the most throughput?

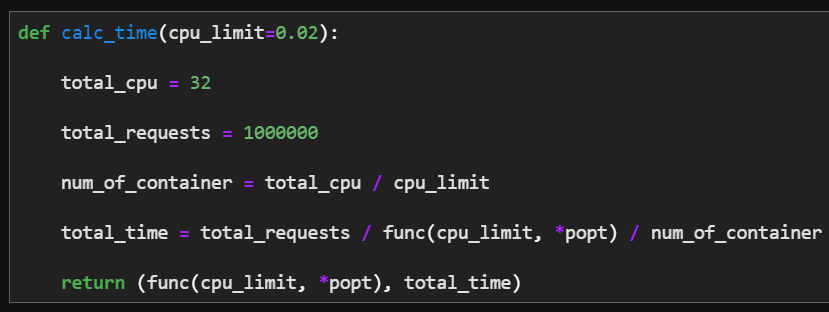

Let’s do some math. Say we have TOTAL_CPUS in total, and each container is sized with a cpu_limit of SIZE. Then we can afford TOTAL_CPUS/SIZE containers without running out of resources. Based on our choice of SIZE, we can work out how many requests each container can handle.
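As a rough sketch of that arithmetic, the helper below multiplies the number of containers that fit by the throughput measured for one container. The function name and the numbers in the example are illustrative assumptions, not measurements from the tests above. It also assumes containers scale independently, which real environments may not honor.

```python
import math


def cluster_throughput(total_cpus, cpu_limit, requests, seconds):
    """Estimate requests/second across all containers that fit in total_cpus.

    requests and seconds describe one load test against a single container
    sized at cpu_limit.
    """
    # Small epsilon guards against floating-point results like 9.999999.
    containers = math.floor(total_cpus / cpu_limit + 1e-9)
    per_container = requests / seconds
    return containers, containers * per_container


# Illustrative numbers only: 4 CPUs, containers of 0.5 CPU,
# each serving 100 requests in 4 seconds.
containers, total_rps = cluster_throughput(4, 0.5, 100, 4.0)
print(containers, total_rps)
```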

In order to find the optimal point, let’s first model the response time versus cpu_limit by fitting a curve to it. In this case, I picked an exponential decay curve, and it works pretty well.
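One simple way to fit such a curve, sketched here with only the standard library, is to assume the form `seconds = floor + a * exp(-b * cpu_limit)`, fix `floor` by hand (the time that remains no matter how much CPU you add), and run a straight-line least-squares fit on `log(seconds - floor)`. The model form, the hand-picked floor, and the data below are all assumptions for illustration. The data is synthetic, not the measurements from this post.

```python
import math


def fit_exp_decay(cpu_limits, seconds, floor):
    """Fit seconds ~ floor + a * exp(-b * cpu) via linear regression in log space."""
    xs, ys = [], []
    for x, t in zip(cpu_limits, seconds):
        if t > floor:  # log is only defined above the floor
            xs.append(x)
            ys.append(math.log(t - floor))
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), -slope  # (a, b)


def predict(cpu_limit, a, b, floor):
    """Predicted response time for a given cpu_limit."""
    return floor + a * math.exp(-b * cpu_limit)


# Synthetic data generated from known parameters, so the fit can be checked.
cpus = [0.01, 0.02, 0.04, 0.08, 0.16, 0.32, 0.64]
times = [2.5 + 50.0 * math.exp(-8.0 * c) for c in cpus]
a, b = fit_exp_decay(cpus, times, floor=2.5)
print(f"a={a:.3f} b={b:.3f}")
```

If you would rather estimate the floor from the data as well, a nonlinear least-squares routine such as `scipy.optimize.curve_fit` is a common choice.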

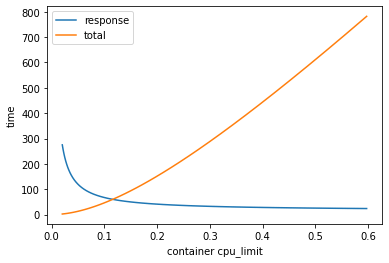

My takeaway is that the smaller you make a container, the more efficient it actually is, despite the fact that each one is slow. As you can see from the blue line, latency gets better and better as you throw more CPU at it, but that limits the total number of containers you can fit into an environment, and the overall total time grows almost linearly.

Rather than finding an optimal point, this is almost a greedy approach: you just need to understand what the acceptable SLA is, then work backwards and choose the minimal amount of CPU that guarantees that SLA. Beyond that, any extra CPU assignment won’t help at all.
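Working backwards from the SLA can be sketched in a few lines: keep the cpu_limit values whose measured time meets the SLA, and take the smallest. The function name is hypothetical and the measurements below are placeholder values for illustration, not results from the tests above.

```python
def smallest_cpu_for_sla(measurements, sla_seconds):
    """Return the smallest cpu_limit whose measured time meets the SLA, or None.

    measurements is an iterable of (cpu_limit, seconds) pairs.
    """
    ok = [cpu for cpu, seconds in measurements if seconds <= sla_seconds]
    return min(ok) if ok else None


# Placeholder measurements: (cpu_limit, seconds to finish the test).
measurements = [(0.064, 30.0), (0.128, 12.0), (0.256, 5.0), (0.512, 2.8), (1.0, 2.7)]
print(smallest_cpu_for_sla(measurements, sla_seconds=5.0))
```

Returning `None` when nothing qualifies makes it explicit that the SLA cannot be met by CPU sizing alone.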