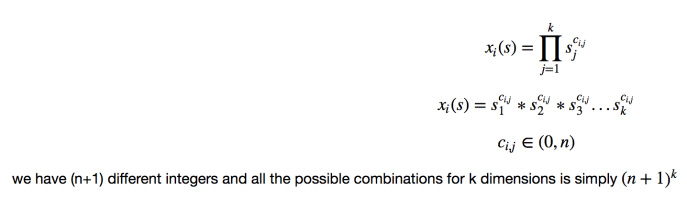

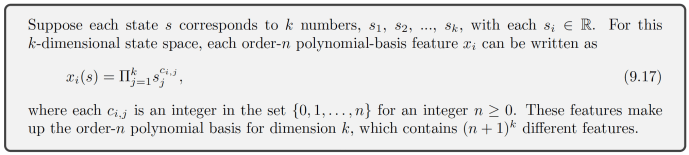

In Section 9.5.1 of Sutton and Barto’s book “Reinforcement Learning – An Introduction” (November 2017 draft), the authors discuss how to construct features for a linear model using “interactions” between different dimensions of a state.

The equation is very short, but it is not straightforward to fully comprehend. Let’s work through a few examples to understand it more intuitively and, hopefully, to see why there are (n+1)^k different features.
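For reference while reading the examples, here is the equation written out in text form, assuming the images above show the order-n polynomial basis from that section. Each feature is a product of powers of the state dimensions:

$$
x_i(s) = \prod_{j=1}^{k} s_j^{c_{i,j}}, \qquad c_{i,j} \in \{0, 1, \ldots, n\}
$$

The count follows from a simple counting argument: each of the k exponents can independently take one of n+1 values, so the number of distinct exponent combinations, and hence features, is

$$
\underbrace{(n+1) \times (n+1) \times \cdots \times (n+1)}_{k \text{ factors}} = (n+1)^k
$$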

Let’s assume the state space has three dimensions, like the spatial position of a physical object. In this case, k=3. We will assign different values to n, starting from 0, and see how the equation unfolds as n grows.
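The same enumeration can be sketched in a few lines of Python. This is only an illustration, not code from the book: the function names `polynomial_exponents` and `polynomial_features` are made up here, and the sketch assumes each feature is the product of the state dimensions raised to exponents between 0 and n.

```python
from itertools import product


def polynomial_exponents(k, n):
    """Return every exponent tuple (c_1, ..., c_k) with each c_j in {0, ..., n}."""
    return list(product(range(n + 1), repeat=k))


def polynomial_features(state, n):
    """Evaluate one feature per exponent tuple: the product of s_j ** c_j."""
    features = []
    for exponents in polynomial_exponents(len(state), n):
        value = 1.0
        for s_j, c_j in zip(state, exponents):
            value *= s_j ** c_j
        features.append(value)
    return features


# Count the features for k=3 as n grows; each count should equal (n+1)**3.
for n in range(3):
    count = len(polynomial_exponents(3, n))
    print(f"n={n}: {count} features")
```

With n=0 the only exponent tuple is (0, 0, 0), which gives the single constant feature 1. With n=1 every dimension is either absent or present to the first power, which gives 2^3 = 8 features.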