Have you ever asked yourself what is really going on behind the command `hdfs dfs -copyFromLocal`: how does it split the file into chunks and store them on different data nodes?

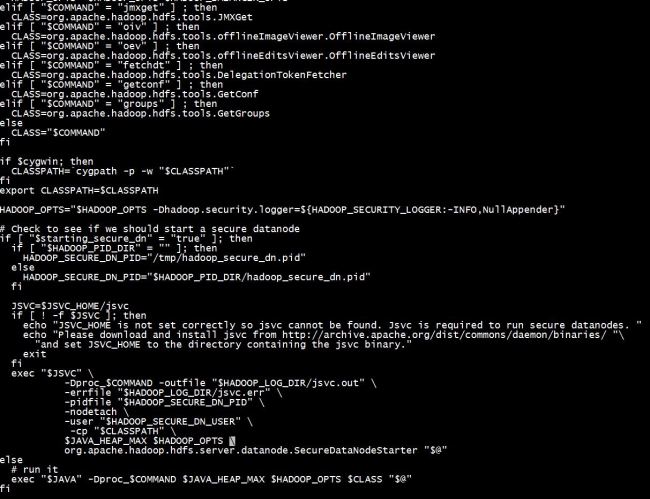

To answer that question, you need to start with the `hdfs` command itself. Once you find the code of the `hdfs` shell script, it looks something like this:

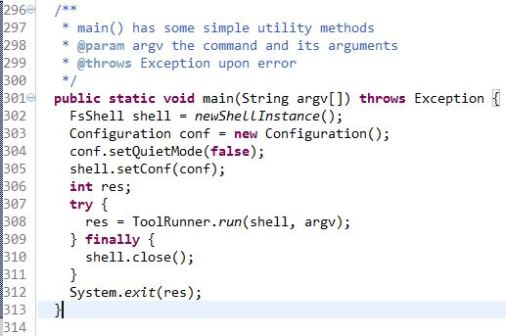

That is the last part of the script, and it basically runs the Java class corresponding to the subcommand you typed after `hdfs`. For example, the command we are interested in, `copyFromLocal`, belongs to the subcommand `dfs`, and `dfs` maps to `org.apache.hadoop.fs.FsShell`. If you go to the `shell` directory, which is in the same folder as `FsShell`, you will see that most of the commands you are familiar with map to a separate Java class: `Mkdir.java` for `dfs -mkdir`, and so on. I took a quick look at the source code of `FsShell.java`.

As you can see, depending on your command, the shell instance (the client) reads in the local Hadoop configuration, sets up a job based on that configuration, and then runs the corresponding command. To me, there are a few daunting pieces in how a command ends up mapped to its Java class, say `Mkdir.java`. There is usually a `CommandFactory` and an abstract class that describes a command, which I don't understand at this stage, so I will jump straight to `CopyCommands.java`.
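To make the mapping idea less daunting, here is a minimal sketch of how a factory could map a command name to a class. It is plain Java and not Hadoop's actual code: `SketchCommand`, `SketchMkdir`, `SketchCommandFactory`, and `CommandRegistrySketch` are all hypothetical names made up for illustration, and the real `CommandFactory` may well work differently.

```java
import java.util.Arrays;
import java.util.HashMap;
import java.util.Map;
import java.util.function.Supplier;

// Hypothetical stand-in for the abstract class that describes a command.
abstract class SketchCommand {
    abstract String run(String[] args);
}

// Hypothetical command class, playing the role of something like Mkdir.java.
class SketchMkdir extends SketchCommand {
    @Override
    String run(String[] args) {
        return "mkdir " + String.join(" ", args);
    }
}

// Hypothetical factory: remembers which class handles which command name.
class SketchCommandFactory {
    private final Map<String, Supplier<SketchCommand>> registry = new HashMap<>();

    void register(String name, Supplier<SketchCommand> maker) {
        registry.put(name, maker);
    }

    // Returns a fresh command instance, or null if the name is unknown.
    SketchCommand create(String name) {
        Supplier<SketchCommand> maker = registry.get(name);
        return maker == null ? null : maker.get();
    }
}

class CommandRegistrySketch {
    // Looks up argv[0] in the factory and hands the remaining args to the command.
    static String dispatch(SketchCommandFactory factory, String[] argv) {
        if (argv.length == 0) {
            return "usage: <command> [args]";
        }
        SketchCommand cmd = factory.create(argv[0]);
        if (cmd == null) {
            return "unknown command: " + argv[0];
        }
        return cmd.run(Arrays.copyOfRange(argv, 1, argv.length));
    }

    public static void main(String[] args) {
        SketchCommandFactory factory = new SketchCommandFactory();
        factory.register("-mkdir", SketchMkdir::new);
        System.out.println(dispatch(factory, new String[] {"-mkdir", "/tmp/demo"}));
    }
}
```

The point of the pattern is that the shell itself never needs a long if-else over command names: each command class is registered once, and the dispatch step stays the same no matter how many commands exist.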

As you can see, the commands `CopyFromLocal` and `CopyToLocal` are actually wrappers around `Put` and `Get`. If you trace the path down, you will find that the `Put` command ends up using `IOUtils.copyBytes`. It is interesting to see that, in the end, real-world usage lands in a function that belongs to the `utility tool kit`. So far, I am still confused about how the job knows how to break the file into chunks and which chunk gets sent where. I guess it might be determined by the filesystem configuration file, with the job client making all the magic happen. In other words, behind that one-liner `ToolRunner.run`, all the coordination happens smartly. That might be the next focus of my reading of the Hadoop Java source code.
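To picture the wrapper relationship and the copy step, here is a rough plain-Java sketch. It is an approximation under my own assumptions, not the Hadoop source: `SketchPut`, `SketchCopyFromLocal`, and `CopySketch` are hypothetical names, and the loop only imitates what a utility like `IOUtils.copyBytes` plausibly does (read into a buffer, write it out, repeat).

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;

class CopySketch {
    // Buffered copy loop: read up to bufferSize bytes, write them, repeat until EOF.
    static long copyBytes(InputStream in, OutputStream out, int bufferSize) throws IOException {
        byte[] buf = new byte[bufferSize];
        long total = 0;
        int n;
        while ((n = in.read(buf)) != -1) {
            out.write(buf, 0, n);
            total += n;
        }
        out.flush();
        return total;
    }
}

// Hypothetical stand-in for the Put command.
class SketchPut {
    String name() {
        return "put";
    }

    // Copies the given bytes through in-memory streams and returns what arrived.
    byte[] copy(byte[] source) {
        ByteArrayOutputStream out = new ByteArrayOutputStream();
        try {
            CopySketch.copyBytes(new ByteArrayInputStream(source), out, 4);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return out.toByteArray();
    }
}

// Hypothetical stand-in for CopyFromLocal: same behavior as Put, different name.
class SketchCopyFromLocal extends SketchPut {
    @Override
    String name() {
        return "copyFromLocal";
    }
}

class CopyCommandsSketch {
    public static void main(String[] args) {
        SketchPut cmd = new SketchCopyFromLocal();
        byte[] copied = cmd.copy("hello hdfs".getBytes(StandardCharsets.UTF_8));
        System.out.println(cmd.name() + " copied " + copied.length + " bytes");
    }
}
```

Notice that a loop like this knows nothing about chunks or data nodes. It just pushes bytes into an `OutputStream`, so whatever splitting and placement happens would have to sit behind the stream it writes to, which is where the search has to continue.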

Hi,

My Hadoop version is 0.20.203. I know that the CopyFromLocal class extends the Put class in higher versions, such as yours. I also tried to find that inheritance relationship in Hadoop 0.20.203, but found none.

So I am confused about how “hadoop fs -put” implements the same function as “hadoop fs -copyFromLocal” in Hadoop 0.20.203. Why can’t I find the implementation of “hadoop fs -put” in FsShell.java in Hadoop 0.20.203? Furthermore, I can’t find its implementation anywhere in the whole Hadoop project, yet I can use it to upload files to HDFS.