Luna Dong Podcast – Building the Knowledge Graph at Amazon with Luna Dong

Three key features of Knowledge Graph:

1. structured data (entities and relationships)

2. canonicalized, rich, and clean data

3. data are connected

At Amazon, digital products are handled separately from retail products. Digital products tend to have better and more well-structured metadata, whereas for retail products the structured data usually has to be extracted from various kinds of real-life data (images, raw text, …).

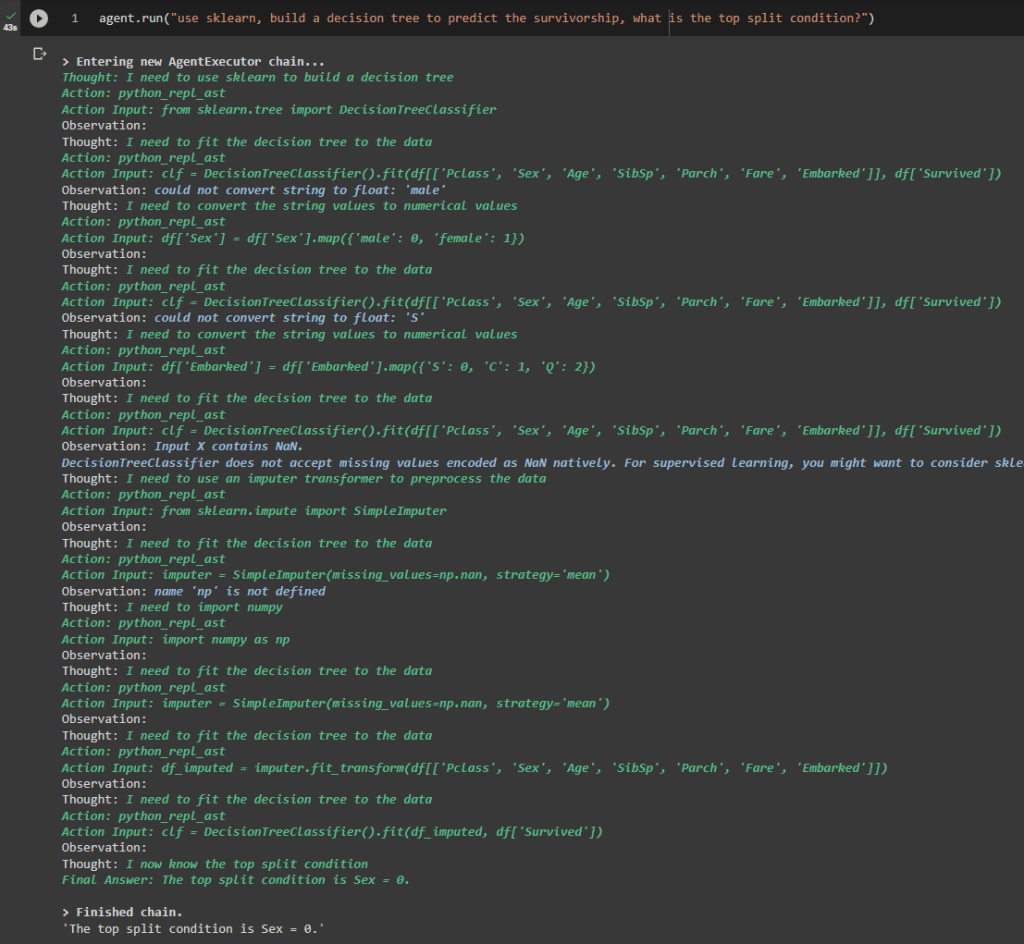

The graph captures “is-a” relationships and “event” information. Use the “seed knowledge” to automate the building of training data: the more you know, the faster you learn.
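One way to picture "seed knowledge automates training data" is a distant-supervision style labeler: facts already in the graph are matched against raw product text to produce token labels without manual annotation. This is only a minimal sketch under assumptions; the `SEED` facts, the `DESCRIPTIONS` text, and the function names are all hypothetical and not from the podcast.

```python
# Hypothetical seed knowledge: attribute values already known for some products.
SEED = {
    "p1": {"flavor": "vanilla"},
    "p2": {"flavor": "dark chocolate"},
}

# Hypothetical raw product descriptions (the unlabeled text).
DESCRIPTIONS = {
    "p1": "Creamy vanilla ice cream in a one pint tub",
    "p2": "Organic dark chocolate bar with sea salt",
}


def label_tokens(text, attribute, value):
    """Tag tokens that match a known value with the attribute name, others with 'O'."""
    tokens = text.lower().split()
    target = value.lower().split()
    labels = ["O"] * len(tokens)
    for start in range(len(tokens) - len(target) + 1):
        if tokens[start:start + len(target)] == target:
            for i in range(start, start + len(target)):
                labels[i] = attribute
    return list(zip(tokens, labels))


def build_training_data(seed, descriptions):
    """Produce one labeled token sequence per seed fact whose product has text."""
    examples = []
    for product_id, attributes in seed.items():
        text = descriptions.get(product_id)
        if text is None:
            continue
        for attribute, value in attributes.items():
            examples.append(label_tokens(text, attribute, value))
    return examples


training_data = build_training_data(SEED, DESCRIPTIONS)
```

The labeled sequences could then train an extraction model that is applied to products with no seed facts, which is where "the more you know, the faster you learn" comes in.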

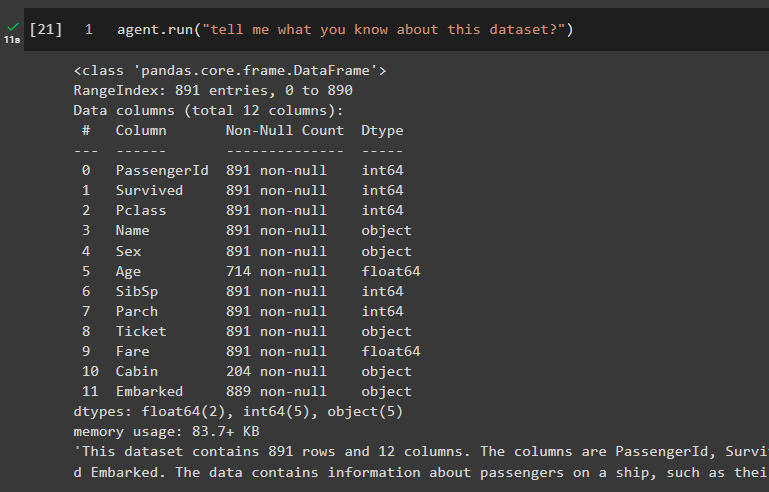

Knowledge Extraction: product data is collected from the web, product descriptions (text, …), web tables, and images.

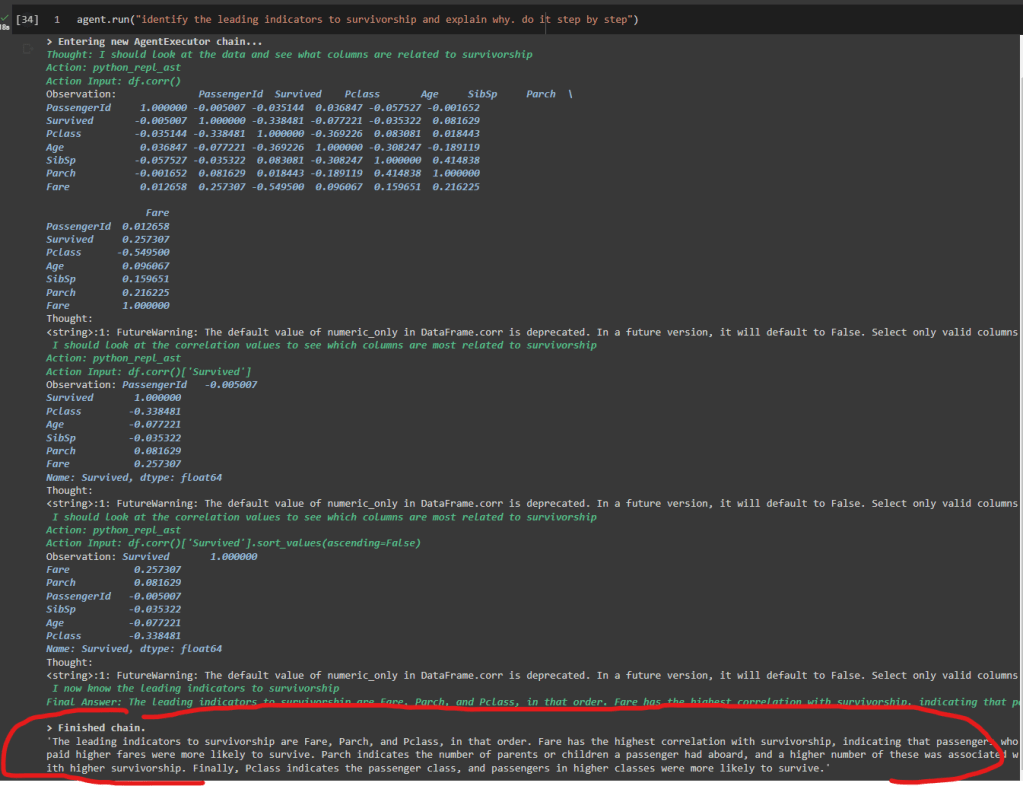

Data Integration: recognizing that “is_a_director_of” is the same relationship as “director”. This work draws on both the database and NLP communities. We put things together and look for inconsistencies (color, product flavor, data sources) to decide whether something is wrong. Data fusion decides which version is right: is this person’s birthday on Feb 28th or Mar 28th? Through this process, you can learn embeddings that can be used for downstream tasks such as search, recommendation, Q&A, and many others.
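The two steps in this note, aligning predicate names and then fusing conflicting values, can be sketched roughly as follows. The alias table, the source names, and the simple majority vote are assumptions for illustration; the podcast does not say which fusion method Amazon uses, and production systems typically also weigh source reliability.

```python
from collections import Counter

# Hypothetical mapping from a source-specific predicate name to a canonical one.
PREDICATE_ALIASES = {"is_a_director_of": "director"}


def canonical_predicate(name):
    """Return the canonical predicate name, or the input if no alias is known."""
    return PREDICATE_ALIASES.get(name, name)


def has_conflict(claims):
    """claims: list of (source, value). True if the sources disagree."""
    return len({value for _, value in claims}) > 1


def fuse(claims):
    """Pick the most frequently claimed value and report its share of the votes."""
    counts = Counter(value for _, value in claims)
    value, votes = counts.most_common(1)[0]
    return value, votes / len(claims)


# Hypothetical conflicting claims about one person's birthday.
birthday_claims = [
    ("source_a", "Feb 28"),
    ("source_b", "Mar 28"),
    ("source_c", "Feb 28"),
]
winner, support = fuse(birthday_claims)
```

Here `winner` is the value backed by two of the three hypothetical sources; a low `support` share would be a natural signal to route the fact to a human reviewer.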

Human in the loop is important because we need high-quality data. People are needed to seed the training data, annotate the data, and calibrate and analyze the overall performance. They are also needed to address last-mile failures if we want to be 99% accurate.

Luna’s most inspiring moment came from Amazon’s fulfillment center: seeing machine power and human power combined.

Data acquisition: the product manufacturer’s website contains a lot of information. Start with general crawling and sometimes do targeted crawling. It is not a binary choice; it is a mixture.

Embedding: conditional embedding. “Spicy” is a valid flavor, but it is unlikely to be an ice cream flavor. The embeddings capture such constraints implicitly: a flavor like spicy generally applies only to certain types of products.
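The idea that a value is plausible only for certain product types can be illustrated with plain conditional frequencies. This is a count-based stand-in, not the learned conditional embedding discussed in the podcast, and the `OBSERVATIONS` data and function names are hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical (product_type, flavor) pairs observed in a catalog.
OBSERVATIONS = [
    ("chips", "spicy"), ("chips", "spicy"), ("chips", "salted"),
    ("ice cream", "vanilla"), ("ice cream", "chocolate"), ("ice cream", "vanilla"),
]


def conditional_table(observations):
    """Count how often each flavor appears for each product type."""
    counts = defaultdict(Counter)
    for product_type, flavor in observations:
        counts[product_type][flavor] += 1
    return counts


def plausibility(counts, product_type, flavor):
    """Estimate P(flavor | product_type); 0.0 if the type was never observed."""
    if product_type not in counts:
        return 0.0
    total = sum(counts[product_type].values())
    return counts[product_type][flavor] / total


table = conditional_table(OBSERVATIONS)
spicy_chips = plausibility(table, "chips", "spicy")
spicy_ice_cream = plausibility(table, "ice cream", "spicy")
```

In this toy data, "spicy" scores well for chips and zero for ice cream. A learned embedding would generalize beyond exact counts, which is the point of capturing the constraint implicitly.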

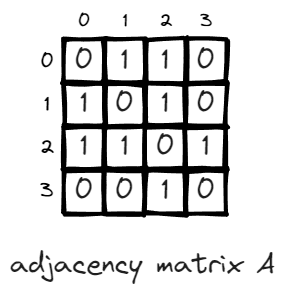

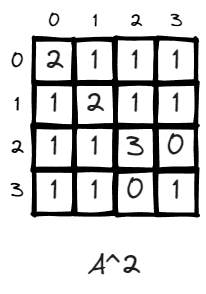

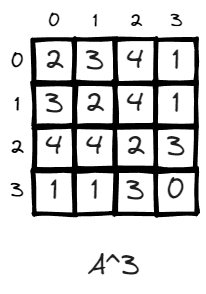

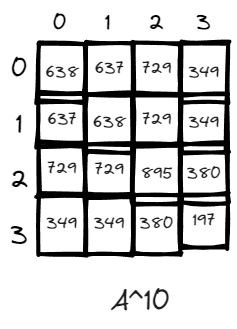

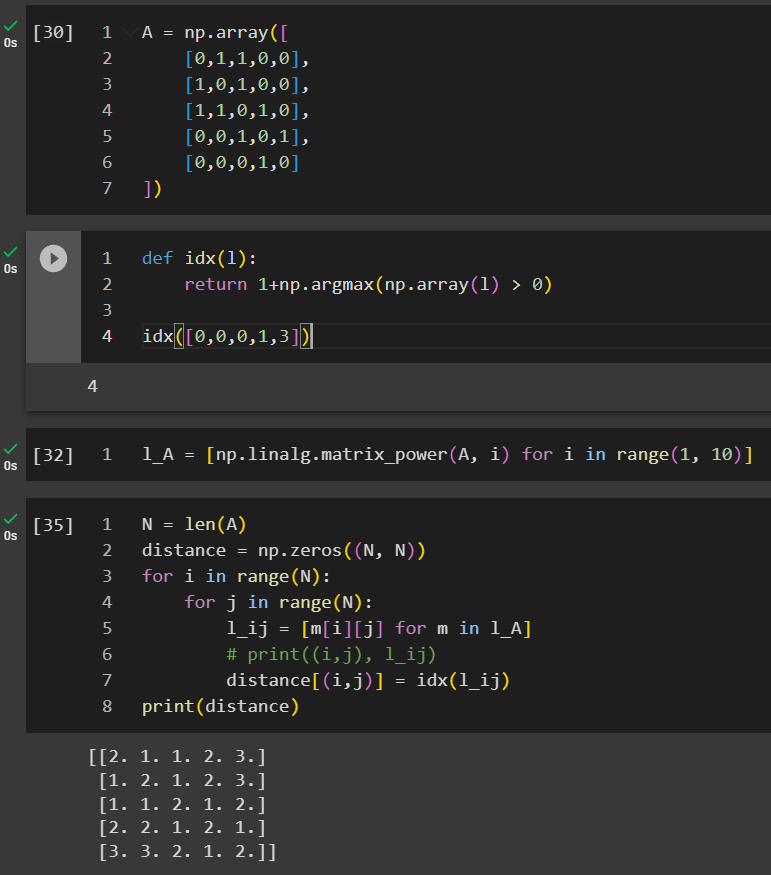

Triple: subject-predicate-object. Look at all the triples together and refine the embeddings that way; the embeddings can propagate through the graph. For example, some products have the flavor spicy. Graph neural networks are one of the most effective ways to solve this problem.
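To make "embeddings propagate in the graph" concrete, here is a minimal sketch of one propagation round over a few triples, where each node's vector becomes the mean of its own vector and its neighbors' vectors. The triples, the 2-dimensional starting vectors, and the function names are hypothetical. A real graph neural network would add learned weights and treat each predicate differently, whereas this sketch ignores the predicate.

```python
# Hypothetical (subject, predicate, object) triples.
TRIPLES = [
    ("chips_a", "has_flavor", "spicy"),
    ("chips_b", "has_flavor", "spicy"),
    ("chips_a", "is_a", "snack"),
]

# Hypothetical 2-dimensional starting vectors for every node.
EMBEDDINGS = {
    "chips_a": [1.0, 0.0],
    "chips_b": [0.0, 1.0],
    "spicy": [0.0, 0.0],
    "snack": [1.0, 1.0],
}


def neighbors(triples):
    """Build an undirected adjacency list from the triples (predicate ignored)."""
    adjacency = {}
    for subject, _, obj in triples:
        adjacency.setdefault(subject, []).append(obj)
        adjacency.setdefault(obj, []).append(subject)
    return adjacency


def propagate(embeddings, triples):
    """One round: new vector = mean of the node's own and its neighbors' vectors."""
    adjacency = neighbors(triples)
    updated = {}
    for node, vector in embeddings.items():
        group = [vector] + [embeddings[n] for n in adjacency.get(node, [])]
        updated[node] = [sum(values) / len(group) for values in zip(*group)]
    return updated


updated = propagate(EMBEDDINGS, TRIPLES)
```

After one round, the "spicy" node has absorbed information from both chip products, and repeated rounds would spread information across longer paths in the graph.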

The knowledge graph is a production system, and the knowledge is generated from a lot of products. There are three major applications: search (intent), recommendation (similarities, but still some differences), and display of information (structured information and structured knowledge, such as better comparison tables).

Luna said most knowledge graphs are built and owned by large corporations, and she wishes there were tools for smaller businesses. She described three levels: the first is the databases and tooling for storing knowledge graphs, and the second is the techniques for entity and relationship extraction.

Open knowledge is an effort to connect and hook up different data sources.